This week I put together a GitHub project with two notebooks I used to generate clouds for Suntime. If you’re familiar with Python and want to play around with training and deploying neural networks onto your iPhone, check it out!

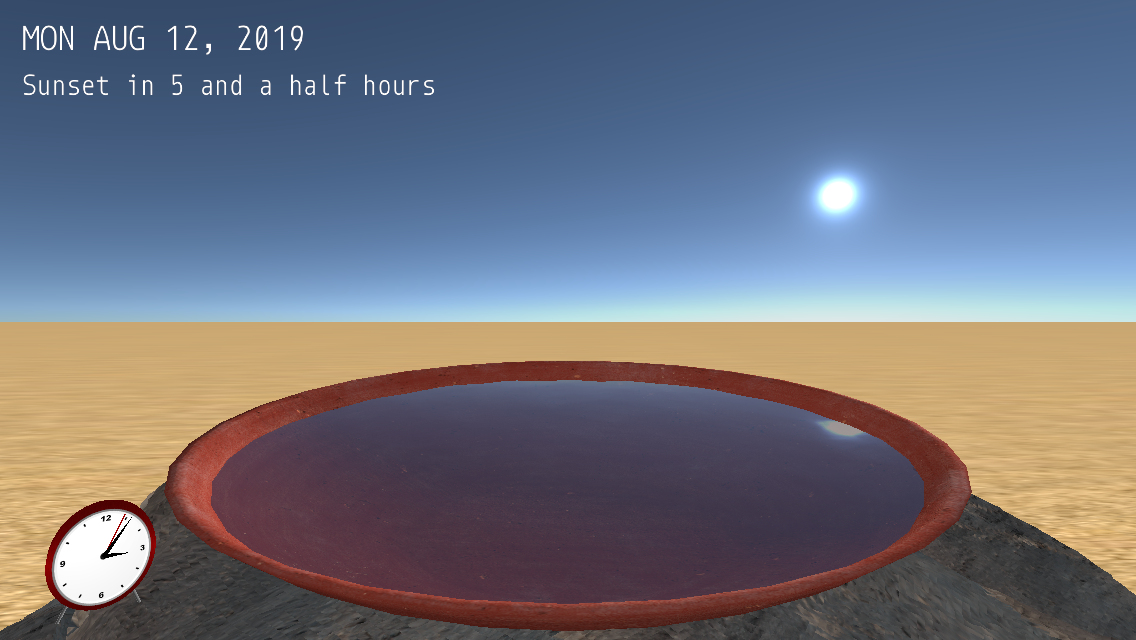

Sometime last year I was playing with different ways to make computer-generated clouds. Back then, Suntime was literally a magical bowl in the desert:

It turns out there are a lot of ways to go about creating clouds. Googling around for “procedural volumetric clouds” will get you a lot of links. As will a search for “noise clouds”.

These approaches looked good in theory, but produced repetitive-looking results. I found myself wanting clouds with more pizazz. More colors, more mysterious shapes. More curation / control over how they’d look to match the tone of the app.

Since I had an interest in training neural networks, and a desktop with an underused video card, it seemed like a Generative Adversarial Network, aka GAN, might be a fun solution to my made-up problem.

Voila, clouds!

If you’re not familiar with GANs, they’re a type of machine learning model. For an example of what they can do, check out This Person Does Not Exist for not-at-all creepy AI-generated people.

To grossly oversummarize what’s going on, you provide reference images and use them to train two neural networks: one to generate images, and another to detect fake images. These “AIs” learn by competing with each other, and you end up with a model that can generate images that look a lot like whatever you’ve trained it with.
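To make the "competing" part a little more concrete, here's a rough sketch of the two scores being traded off, using the standard GAN cross-entropy losses. This is an illustration, not code from the notebooks: the function names are made up, and `d_real` / `d_fake` stand in for the detector's "this is real" probability on a real image and on a generated one.

```python
import math

def discriminator_loss(d_real, d_fake):
    """Cross-entropy the detector tries to minimize.

    d_real: detector's probability that a real image is real.
    d_fake: detector's probability that a generated image is real.
    """
    return -(math.log(d_real) + math.log(1.0 - d_fake))

def generator_loss(d_fake):
    """Non-saturating generator loss: low when the detector is fooled."""
    return -math.log(d_fake)

# Early on, the detector spots fakes easily: its loss is low, the generator's is high.
print(discriminator_loss(d_real=0.9, d_fake=0.1), generator_loss(d_fake=0.1))

# When the detector can only guess (0.5 either way), the two are roughly balanced.
print(discriminator_loss(d_real=0.5, d_fake=0.5), generator_loss(d_fake=0.5))
```

Training alternates between nudging the detector to lower the first number and nudging the generator to lower the second.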

My model needed to run on iOS devices, so it needed to be small (as in file size: some models get very large) and simple enough to run well on the old iPhone SE I was using. I ended up training a very simple DCGAN model. It generates 128x128-pixel color images.
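For a feel of how a DCGAN-style generator gets to 128x128 and how big it ends up, here's some back-of-the-envelope arithmetic. Everything specific in it is an assumption for illustration, not the configuration from the notebooks: a 100-value latent vector, 4x4 transposed convolutions, and the channel widths in `CHANNELS`. Biases and normalization parameters are ignored.

```python
def conv_transpose_out(size, kernel=4, stride=2, padding=1):
    """Output width of a transposed convolution (dilation 1, no output padding)."""
    return (size - 1) * stride - 2 * padding + kernel

# Hypothetical layer widths: latent vector in, RGB image out.
LATENT_DIM = 100
CHANNELS = [LATENT_DIM, 256, 128, 64, 32, 16, 3]
KERNEL = 4

def generator_plan(channels=CHANNELS, kernel=KERNEL):
    """Return the image width after each layer and the total weight count."""
    size, weights, sizes = 1, 0, []
    for i, (c_in, c_out) in enumerate(zip(channels, channels[1:])):
        if i == 0:
            # First layer projects the 1x1 latent "pixel" up to 4x4.
            size = conv_transpose_out(size, kernel, stride=1, padding=0)
        else:
            # Every later layer doubles the width and height.
            size = conv_transpose_out(size, kernel, stride=2, padding=1)
        sizes.append(size)
        weights += c_in * c_out * kernel * kernel
    return sizes, weights

sizes, weights = generator_plan()
print(sizes)  # [4, 8, 16, 32, 64, 128]
print(weights, "weights, roughly", round(weights * 4 / 2**20, 1), "MiB as float32")
```

Halving the channel widths cuts the weight count to roughly a quarter, which is the kind of knob that keeps a model like this phone-sized.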

Here it’s making flowers:

Abstract landscapes:

And of course faces:

These images look pretty rough-hewn, but with a little post-processing on the phone they make for some pretty scenes. Here are a couple of scenes from the current app:

For some state-of-the-art weirdness, check out NVIDIA’s StyleGAN2 presentation:

— Andrew